Thoughts On ... AI-AI-Uh-O: A Month-Long AI Disruption Serial

Barasch - Wellwood Wealth Partners

March 1, 2026

While the serial novel got its start way back in the 17th century, it did not “get its sea legs” until Victorian-era England. In 1836 and 1837, a 24-year-old journalist began publishing a series of related stories called “The Pickwick Papers”. “The Papers” and its author – Charles Dickens – became a phenomenon amongst the English, spawning a theatrical version, joke books and other merchandise (Dickens, for his part, would go on to bore school kids for the next 200 years). And so was born an era marked by cliffhangers designed to titillate readers and leave them wanting more from the “next episode”. This era would last well into the middle of the 20th century before gradually petering out (although Stephen King did do a great reboot in the mid-1990s with “The Green Mile” series) as other forms of entertainment – radio, comic books, television and movies – took up the mantle.

Not to be deterred, we are going to spend the month of March rebooting the serial with a series on the disruption caused by artificial intelligence. We did not set out to do this, but when three pages turned into six, then ten, then fifteen, we decided that rather than take a sledgehammer to the length of the piece (or subject our readers to a tome), we would break it up into bite-sized pieces. We do not promise weekly cliffhangers, but we will do our best to keep you wanting more. We should note that we now use AI in our work and expect it to become an ever-growing part of that work in the coming years. In fact, some of the charts in this piece were done with an “AI-assist”.

With that in mind, let’s get to it.

Section I: History is rife with anxiety

We often like to think that this time is different. That is – a transformative technology has finally gone too far, millions of people will lose their jobs, and the economy will be imperiled as the new technology displaces human workers. Economists call the underlying logical error the “lump of labor fallacy”: the assumption that there is a fixed amount of work (a “lump”) to be done, and that any task claimed by a machine is permanently lost to human workers. History has repeatedly demonstrated that this assumption is wrong, yet the fear resurfaces with each new technology because the initial disruption is very real, even when the feared long-term outcome never materializes. Let’s explore a few historical examples:

The Industrial Revolution (1760–1850): The Luddites

Skilled English textile workers (known collectively as “the Luddites”), weavers and framework knitters who had spent years mastering their craft, faced a genuine economic catastrophe as power looms mechanized what had been well-paid artisan work. After their appeals to employers and the government failed, the Luddites resorted to organized nighttime raids on factories and mills to destroy the new machines, which they viewed as the source of their problems. The British government responded by making machine destruction a capital offense, and the Luddite movement effectively ended in 1813 with mass executions.

- The ultimate outcome: Real wages for handloom weavers fell for roughly a generation before the industrial economy absorbed displaced workers into new roles. Textile employment ultimately grew massively — by 1850, Britain employed far more people in textiles than it did in 1800, because mechanization lowered prices, expanded markets, and created enormous demand. But the transition was painful and lasted decades. In other words, short-term fears were justified, but the long-term benefits were massive.

Electrification and the Assembly Line (1910s–1920s)

Henry Ford's moving assembly line, introduced in 1913, transformed automobile manufacturing by breaking complex work into simple, repetitive tasks. Critics argued this "deskilled" work and would create an army of interchangeable, disposable workers.

- The ultimate outcome: Productivity gains were so dramatic that Ford was able to slash car prices by over 60%, creating mass consumer demand that did not previously exist. The automotive sector went on to employ millions across manufacturing, retail, service, and infrastructure. The key mechanism: lower costs created new demand that exceeded the original displacement of workers.

The ATM and Bank Tellers (1970 to Present): Automation's Most Famous Paradox

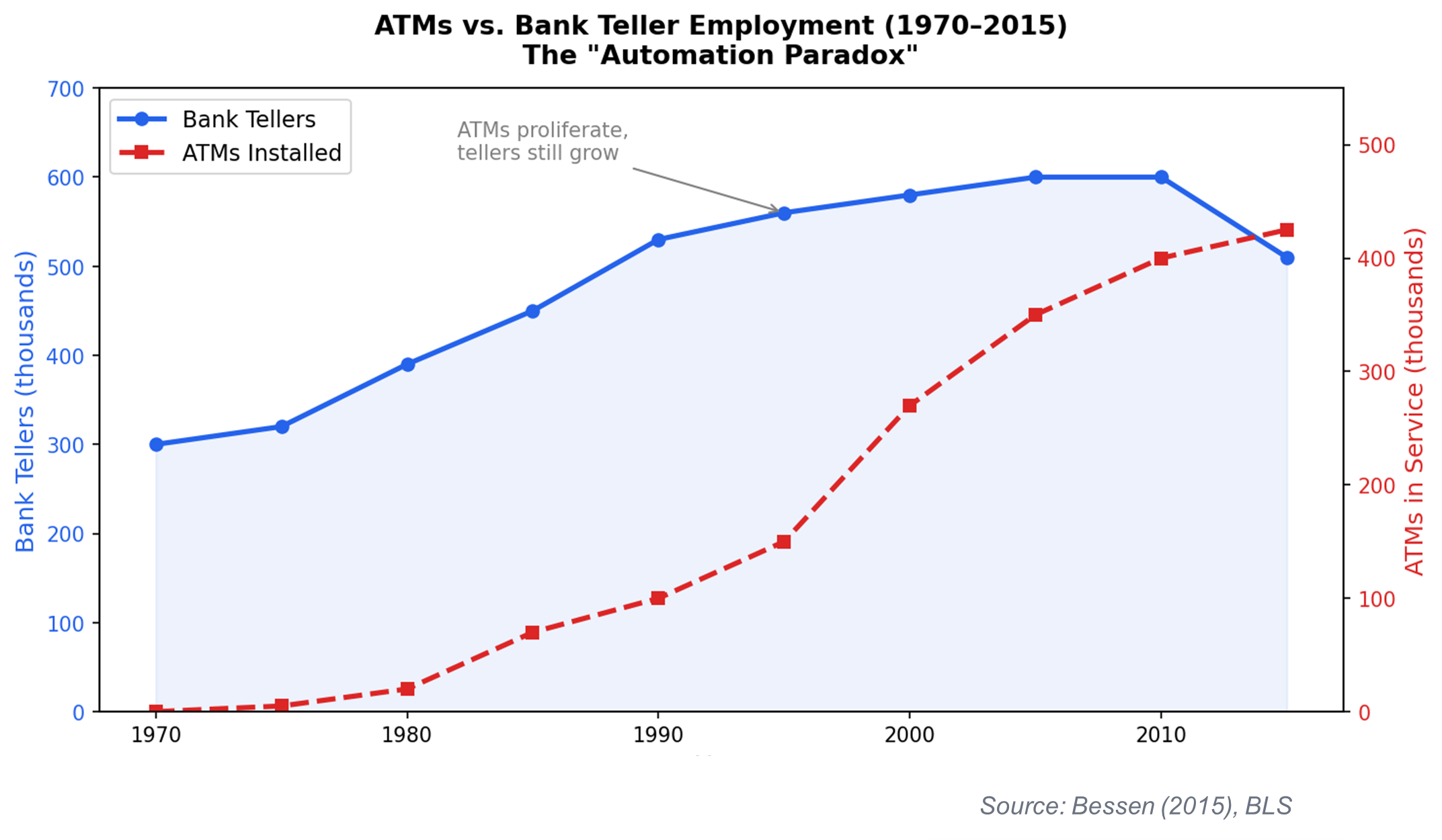

Let’s start with a chart and then comment:

When the first ATM was installed at Chemical Bank on Long Island in 1969, industry observers predicted the machine would steadily eliminate the need for human tellers, as the ATM could do the most common teller tasks faster, cheaper, and around the clock.

- The ultimate outcome: Economist James Bessen of Boston University documented the surprising reality: as ATM deployment accelerated through the 1980s and 1990s (eventually reaching over 400k machines nationwide), bank teller employment roughly doubled, from approximately 300k in 1970 to nearly 600k by 2010. Why? ATMs reduced the cost of operating a bank branch, which prompted banks to open more branches, which increased demand for tellers. The average urban bank branch went from 21 tellers to 13, but the number of branches rose 43%, offsetting most of that per-branch decline. Combined with the broader expansion of branch networks, the net result was more tellers, not fewer.

Okay, with those out of the way, let’s pivot to a more recent historical example – the Internet. Given its proximity to current events, we will dedicate an entire section to it and use it as an opportunity to wind down part one of the piece.

Section II: Al Gore’s folly – The Internet Era (1990 to Present)

Of all the historical analogues to AI, the commercialization of the Internet in the mid-1990s is the most instructive — not because it is perfectly analogous, but rather because it played out within living memory, the fears it generated were remarkably similar, and the gaps between predicted and actual outcomes were so dramatic.

The perfectly reasonable predictions …

By the late 1990s, serious analysts — not just modern-day Luddites — were predicting the Internet would hollow out entire professions:

- Travel agents would be replaced by booking engines (Travelocity launched in 1996).

- Stockbrokers would be replaced by online trading (E*TRADE went public in 1996).

- Physical retail workers would be displaced by e-commerce (Amazon was founded in 1994).

- Bank tellers would become unnecessary as online banking removed the need for branch visits (Royal Bank of Canada began offering online services in 1995).

- Classified advertising and print journalism would collapse as online forums and news websites launched (Craigslist was launched in 1995).

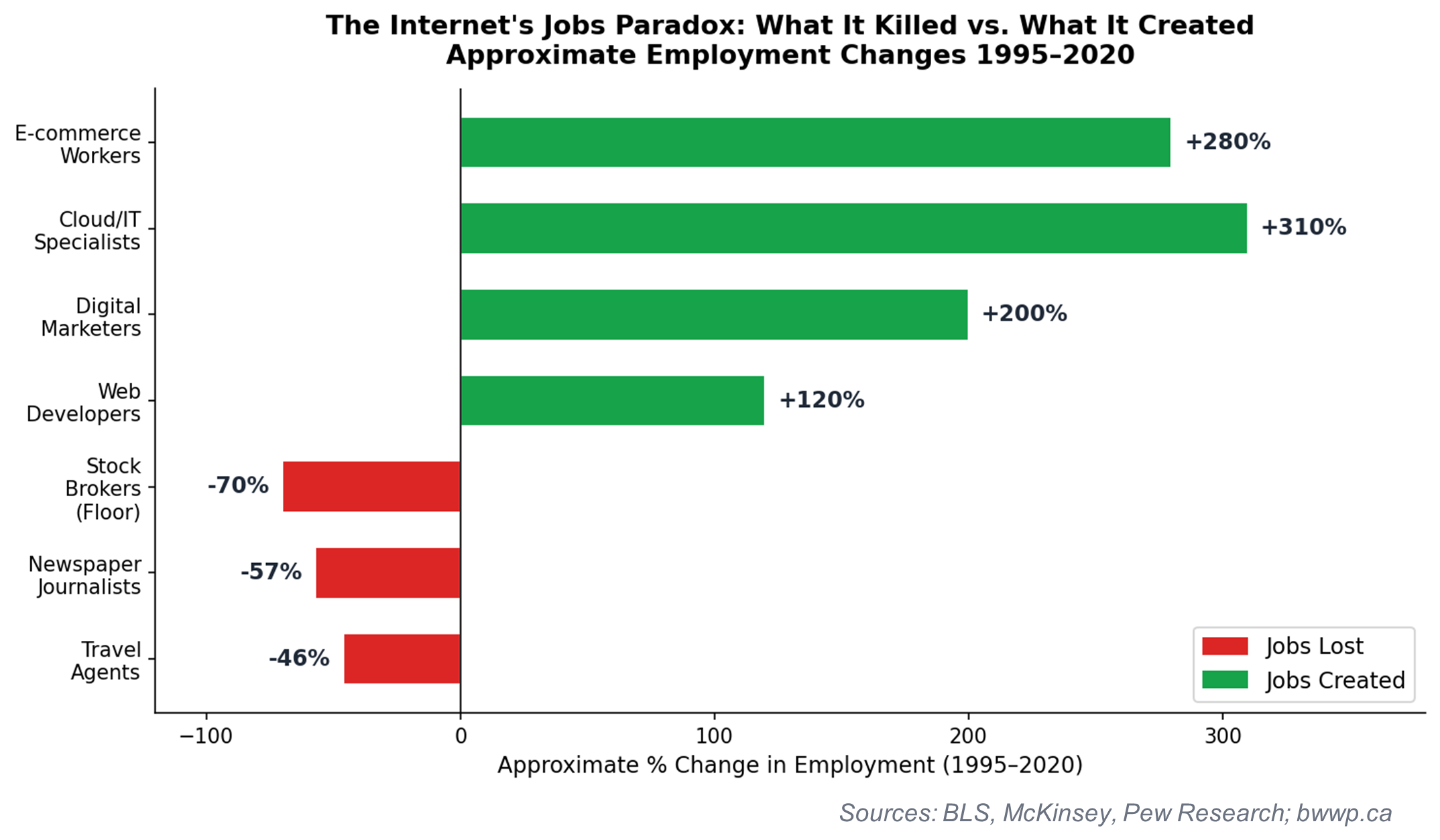

Many of these predictions were directionally correct. Travel agent employment did fall by nearly half over the following decade. Stockbrokers on the floors of exchanges largely disappeared. Classified advertising was almost entirely displaced by digital platforms, devastating newspaper revenue and newsroom employment.

… that ultimately proved mostly wrong

Let’s again start with a chart and then comment:

What forecasters systematically underestimated was the volume of new categories of work the internet would create — roles that simply did not exist and could not have been imagined in 1995:

- Web developers and designers (from ~20,000 in 1996 to over 4 million by 2020 including adjacent roles)

- Search engine optimization specialists, social media managers and influencer marketers

- Cloud infrastructure and DevOps engineers

- E-commerce operations, logistics, and last-mile delivery workers

- Data scientists, analytics professionals, and growth hackers

- Content creators, podcasters, and streaming platform workers

Thus, the Internet ended up being both a job destroyer and a massive job creator. While the human mind has the capacity to foresee the former – this new technology is going to displace a lot of jobs – it is less effective at imagining the latter – this new technology is going to create far more jobs that did not previously exist.

Okay, let’s end part one there, as we suspect some eyes are glazing over. Next week (cliffhanger time), we will look at how AI is coming for your job and how something called “the J-curve” might predict what is going to happen.